I absolutely love this Apple ad …

Whenever it comes on, I completely stop whatever it is I’m doing and watch it.

There is another ad I love almost as much, and it’s this one by Google.

What I love about these ads is how they tell you a story.

One in which the product is just a supporting actor.

A story of how their technology fits into your life and makes it awesome.

You see, some people can relate to wanting to have a cooler phone than the other person.

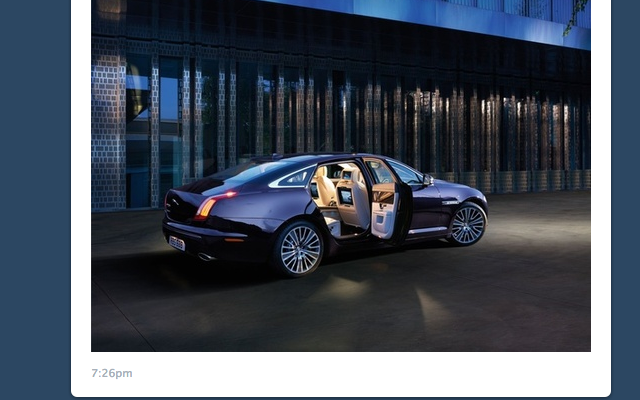

Some people can relate to having a more expensive car or getting a table at that impossible-to-get-reservations restaurant.

Yeah, people get off on having stuff that no one else has.

But that is a small subset of human aspiration.

The things people really want are usually much simpler than that.

People want to see things they’ve never seen before, go places they’ve never been, hang out with people they like, eat awesome food, have someone think they’re attractive … even sexy … and then share all of that with people they know.

People want to make their friends laugh and have them think they’re a riot.

People really just want simple things most of the time.

So you can actually make your product huge by showing people how it helps them do the little things.

How you seamlessly fit into their life and make it awesome.

If you can do that … you’ve won.

That’s how Facebook got big.

That’s how Instagram got big.

That’s how the iPad got big.

They are personal awesomeness force multipliers.

As an example, when you arrive at airbnb.com, it shows you breathtakingly beautiful places that it has listed.

They fade in and out in the background.

Pazin, Croatia. Barcelona, Spain. Copenhagen, Denmark.

Beautiful homes, in beautiful places, for $80 a night!

It makes your imagination run wild. You start to wonder …

“A plane ticket would be … ,”

“If I stayed 2 nights I could do this for less than …”

You start to create your own story in your head, because you can’t help it. You’re human.

And without even seeing a single ad, you’re hooked.

Because Airbnb can fit into your life and make it way awesome.

So the question, if you’re a product maker, is … What’s your story?

How does your thing seamlessly fit into my life and make it awesome?

Relevant: Want to Increase a Product’s Value by 2,706%? Give It a Story